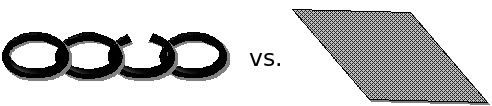

When explaining the desired properties of a security system, I often use the metaphor of a mesh versus a chain. A mesh implies many interdependent checks, protection measures, and stopgaps. A chain implies a long sequence of independent checks, each assuming or relying on the results of the others.

With a mesh, it’s clear that if you cut one or more links, your security still holds. With a chain, any time a single link is cut, the whole chain fails. Unfortunately, since it’s easier to design with the chain metaphor, most systems never last when individual assumptions begin to fail. There are surprisingly few systems that are designed with the mesh model, despite it clearly being stronger.
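
As a rough illustration of the difference, here is a minimal Python sketch. It assumes every check fails independently with the same probability, and that an idealized mesh is defeated only when every check fails. Both are simplifying assumptions, and the function names are hypothetical.

```python
def chain_failure_probability(p_link: float, n_links: int) -> float:
    """A chain is broken as soon as ANY single link is broken."""
    # Probability that every link holds is (1 - p)^n; the chain fails otherwise.
    return 1.0 - (1.0 - p_link) ** n_links


def mesh_failure_probability(p_check: float, n_checks: int) -> float:
    """Idealized mesh (assumption): broken only if ALL checks are broken."""
    return p_check ** n_checks


if __name__ == "__main__":
    # Five checks, each with a 10% chance of being defeated.
    print("chain:", chain_failure_probability(0.1, 5))
    print("mesh: ", mesh_failure_probability(0.1, 5))
```

With five checks that each fail 10% of the time, the chain is broken roughly 41% of the time, while the idealized mesh is broken about once in 100,000 attempts. Real meshes sit somewhere in between, since their checks are rarely fully independent.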

An example of a chain design is SSL accelerators that implement “split termination.” In this system, the server’s private key is moved to a middle box that decrypts the SSL session, load balances it or modifies it in some way, and then re-encrypts the data with the server’s public key and delivers it.

There are a number of weaknesses in this design beyond the obvious one that the data is in the clear while being processed by the SSL appliance. If you keep your private key in an HSM, you’ve just downgraded its security to “stored in flash and RAM of a front-end network device.” Also, now there are two places to compromise the key. Even if both offered equal security but different implementations (i.e., different bugs), you’ve reduced the private key’s security by 50% because an attacker can choose between the two targets based on their bag of exploits. But obviously, they do not offer equal security since most network devices do not include an HSM for cost reasons.

Another weakness is insertion or downgrade attacks. Insertion attacks involve the attacker connecting to the middle box and sending data to the server. The SSL appliance happily encrypts the data and delivers it to the server. If your fraud-prevention software checks for potentially phished connections by checking SSL parameters, the middle box just lied to it about the original level of security. Because the fraud prevention application’s original assumption that the other endpoint is a client was violated, it no longer can say anything meaningful about the client’s SSL connection. A downgrade attack is similar in that SSL v2 (which had trivial flaws) might be allowed on the client side but this wouldn’t show up in the connection on the server side.

The long chain of independent links introduces many potential or actual flaws. Each link has to be checked for each flaw that exists (m * n), and a failure of any link fully compromises the data.

An example of a mesh design is TLS/SSLv3 session key derivation. The two aspects that are particularly nice are the cipher downgrade prevention and the combined HMAC algorithm. In SSLv2, the per-session cipher could be downgraded by a man-in-the-middle. When each side advertised its crypto capabilities, the attacker could lie to both, saying that neither one had anything very strong, and neither side could detect that this had occurred. Each message in the protocol was like a link in the chain, depending on the values of the previous ones without any way to verify that the previous messages hadn’t been tampered with.

SSLv3 changed this by making each side hash all the messages it had seen and use the hash as part of the generated session key. If an attacker deleted or modified messages (i.e., to reduce the advertised list of ciphers to the weakest possible), the session key for one or both sides would be incorrect. This would be detected when the Finished message was received and then both would drop the connection or try again.
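
The idea of binding the whole handshake into a final check can be sketched as follows. This is not the SSLv3 wire format or its key derivation: it uses HMAC-SHA-256 from the Python standard library as a stand-in, and the function names, message strings, and shared secret are all hypothetical.

```python
import hashlib
import hmac
from typing import Iterable


def finished_value(shared_secret: bytes, handshake_messages: Iterable[bytes]) -> bytes:
    """Keyed digest over every handshake message this side has seen."""
    transcript = hashlib.sha256()
    for message in handshake_messages:
        # Length prefix keeps message boundaries unambiguous.
        transcript.update(len(message).to_bytes(4, "big"))
        transcript.update(message)
    return hmac.new(shared_secret, transcript.digest(), hashlib.sha256).digest()


def handshake_intact(shared_secret: bytes,
                     sent_by_client: Iterable[bytes],
                     seen_by_server: Iterable[bytes]) -> bool:
    """True only if both sides saw exactly the same handshake messages."""
    client_value = finished_value(shared_secret, sent_by_client)
    server_value = finished_value(shared_secret, seen_by_server)
    return hmac.compare_digest(client_value, server_value)


if __name__ == "__main__":
    secret = b"illustrative-shared-secret"
    hello = b"ClientHello ciphers=STRONG,WEAK"
    stripped = b"ClientHello ciphers=WEAK"  # attacker removed the strong cipher
    print(handshake_intact(secret, [hello], [hello]))
    print(handshake_intact(secret, [hello], [stripped]))
```

Because the attacker does not know the shared secret, he cannot produce a matching value for the modified transcript, so the tampering surfaces at the end of the handshake rather than going unnoticed.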

The hashing primitive used for deriving keys in SSLv3 is a bit strange. It is similar to the modern HMAC construction but uses two separate hash functions, MD5 and SHA-1. At the time, this seemed insane since an attacker would have to perform a pre-image attack (even harder than a collision attack) against the hash function to find a result that would allow him to insert or modify handshake messages. Paul Kocher, the author of SSLv3, chose to use an HMAC that combined two different hash functions so that an attacker would have to find a pre-image for both. This seemed like it was going too far, increasing complexity in an area that was already quite safe. Implementers also complained at having to include two hash functions.
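
The benefit of combining two hash functions can be sketched in a few lines of Python. This is a simplified illustration, not the SSLv3 construction itself (which also mixes in the master secret and padding); the function name `dual_digest` is hypothetical.

```python
import hashlib


def dual_digest(handshake_messages: bytes) -> bytes:
    """Concatenate MD5 and SHA-1 digests of the same input.

    A forged input must match BOTH halves at once, so a weakness in
    only one of the two hash functions is not enough on its own.
    """
    md5_part = hashlib.md5(handshake_messages).digest()    # 16 bytes
    sha1_part = hashlib.sha1(handshake_messages).digest()  # 20 bytes
    return md5_part + sha1_part


if __name__ == "__main__":
    digest = dual_digest(b"ClientHello|ServerHello|...")
    print(len(digest), digest.hex())
```

The cost is exactly what the implementers complained about: two hash implementations and a longer digest, in exchange for not depending on either function alone.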

When the collision in MD5 and then an expected collision in SHA-1 appeared a few years ago, it didn’t seem so crazy, although SSLv3 would still be secure even if it only used one hash function. I recently asked Paul why he was so focused on hash functions back in 1996 and he said, “I just didn’t trust them. MD5 hadn’t had much scrutiny and SHA-1 was brand new. So I took a conservative approach.” Of course, the genius is in knowing where it is most necessary to apply mesh principles to a design.

I have been thinking about these sorts of mechanisms as well, although my working title of “designing reinforcing security mechanisms” certainly does not have the ring of “mesh design”. What got me started was looking at well-designed hardware security mechanisms (HSMs and the like). There you often have protection mechanisms that can individually be defeated but that work together and complement each other such that the whole is far stronger than the individual mechanisms. An example would be an accelerometer linked to a tamper alarm. By itself it is pretty easy to defeat, but if you use it in conjunction with a hard encasement it becomes far more effective, as an attacker would have a high probability of triggering an alarm while cutting through the device.

Your example of SSL makes the need for such designs very clear. In fact, the modifications made to TLS to rely more on the security of SHA-1 in the key generation function, in the face of the mounting discontent with MD5, are further evidence of this.

What would be nice is a way to model or at least formalize these relationships when designing security mechanisms and performing threat modeling.

Whilst I agree with your sentiment, I don’t necessarily think the example is correct.

Terminating the SSL before the final consumer of the data adds extra vulnerabilities (as listed in your post) and hence risk; however, it may reduce the risk to the overall _system_ because:

* The risk of any SSL implementation flaws being exploited may be lower in the appliance than in the server;

* In the event that an SSL flaw is exploited:

  * The damage done (i.e., asset value) of having the SSL appliance compromised will likely be lower than the damage in having the server compromised;

  * The risk of compromise of associated assets on the server (for example: application code/logic, database, OS) will be lower with an SSL appliance.

* Adding the SSL appliance (i.e., proxy) to the design will allow security tools to inspect/alter the traffic (which may end up lowering the risk of application compromise). Of course, this can conceivably be done on the host without the need for an extra SSL layer.

e, let me address each of your bullets by adding what I think is the hidden assumption.

* … because no appliances use OpenSSL like the server does. And appliances that use a home-grown implementation are less likely to have flaws than a publicly-reviewed codebase that is 10x older and used in 1000x more systems.

* … because the appliance sees all the decrypted SSL traffic for all the servers behind it, but it’s more valuable to see the data on a single server

* … because other stuff on the server like the application itself is more valuable than the data that was encrypted with SSL

* … because seeing what’s going on for the few malicious connections is more important than protecting the data of the millions of legitimate connections

I can only reply with a confused “huh???”

Nate, thanks for the reply.

The answer to the risk equation is really going to depend on the specifics of the system in question. In reply to your points:

* I’m not assuming that the appliance will be more trustworthy. I’m just pointing out that it is _possible_ that it may be harder to exploit. This will obviously depend on the specific implementation and is something the security architect will need to evaluate.

* I was actually assuming that the servers would be homogeneous enough that if you could exploit one, you could exploit all.

* Possibly. The amount of confidential data in flight may be a small drop in the ocean compared to the data that a full application compromise could lead to (i.e., all data that the application has access to).

* Possibly. I don’t give much credence to trying to detect attacks, but the ability to detect a compromised connection could be invaluable in reducing the damage done.

I’m not trying to defend the usefulness of appliances by the way.

Using an SSL appliance will likely add more risk due to the extra complexity; however, it is possible that it may reduce risk. You are very much correct in stating that it is a chain design and does not add another layer of defence.

Thanks for clarifying. I mostly agree.